
Training an AI Animator

Sam L'Huillier. Supervised by Dr. Tania Stathaki. Imperial College London. June 2022

TL;DR:

In this project, I built an AI cartoon generator trained on the Flintstones dataset as my final year project at Imperial College London. It is one of the very first works to use deep neural networks to generate animated video.

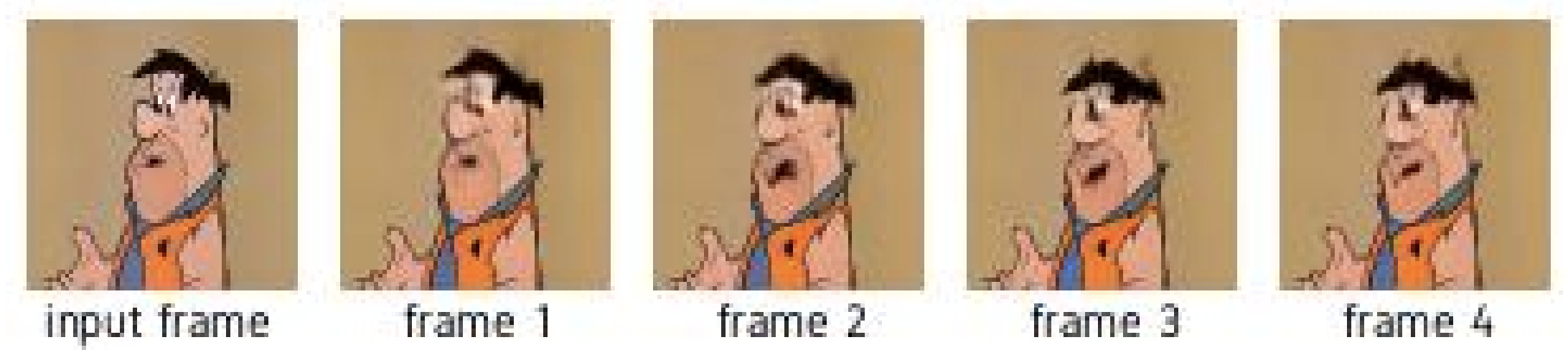

The project mainly explores autoregressive transformer models and diffusion models, all with the aim of generating temporally coherent animations. Give the autoregressive model an input frame and it will generate 4 video frames that could follow that input.
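To make "input frame in, 4 frames out" concrete, here is a minimal sketch of autoregressive frame generation. It assumes each frame has already been turned into an `H x W` grid of discrete tokens by a discrete VAE. The input frame's tokens are flattened into a prompt, and the model then samples the next `4 * H * W` tokens one at a time. `toy_next_token_logits` is a hypothetical stand-in for the trained GPT-2-like model, and the grid and vocabulary sizes are illustrative, not the project's settings.

```python
import math
import random
from typing import Callable, List

# Illustrative sizes only (assumptions, not the project's settings).
GRID_H, GRID_W = 4, 4        # latent token grid per frame
VOCAB_SIZE = 16              # codebook size of the (assumed) discrete VAE
NUM_FUTURE_FRAMES = 4        # frames predicted after the input frame

Frame = List[List[int]]


def toy_next_token_logits(context: List[int]) -> List[float]:
    """Hypothetical stand-in for the GPT-2-like model: boosts the token at
    the same grid position one frame earlier (crude temporal coherence)."""
    tokens_per_frame = GRID_H * GRID_W
    logits = [0.0] * VOCAB_SIZE
    if len(context) >= tokens_per_frame:
        logits[context[-tokens_per_frame]] += 3.0
    return logits


def sample(logits: List[float], rng: random.Random, temperature: float = 1.0) -> int:
    # Softmax with temperature, then draw one token index.
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    weights = [math.exp(s - m) for s in scaled]
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


def generate_frames(input_frame: Frame,
                    next_token_logits: Callable[[List[int]], List[float]],
                    seed: int = 0) -> List[Frame]:
    rng = random.Random(seed)
    # Flatten the input frame row by row: this is the prompt.
    context = [tok for row in input_frame for tok in row]
    n_new = NUM_FUTURE_FRAMES * GRID_H * GRID_W
    for _ in range(n_new):
        # Each new token is conditioned on every token generated so far.
        context.append(sample(next_token_logits(context), rng))
    generated = context[GRID_H * GRID_W:]
    # Reshape the flat token stream back into GRID_H x GRID_W frames.
    frames = []
    for f in range(NUM_FUTURE_FRAMES):
        flat = generated[f * GRID_H * GRID_W:(f + 1) * GRID_H * GRID_W]
        frames.append([flat[r * GRID_W:(r + 1) * GRID_W] for r in range(GRID_H)])
    return frames


input_frame = [[(r * GRID_W + c) % VOCAB_SIZE for c in range(GRID_W)]
               for r in range(GRID_H)]
frames = generate_frames(input_frame, toy_next_token_logits)
print(len(frames), len(frames[0]), len(frames[0][0]))  # 4 4 4
```

In a full pipeline, the generated token grids would be passed through the discrete VAE's decoder to get pixels back. Because every new token attends to all earlier frames' tokens, the model can in principle keep consecutive frames consistent.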

A discrete VAE combined with a GPT-2-like model is used for video prediction, and diffusion models are used for unconditional generation.
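For the diffusion side, here is a minimal sketch of the forward (noising) process used to train DDPM-style models. It assumes the standard linear β schedule from 1e-4 to 0.02 over 1000 steps, which are the DDPM paper's defaults; the project's own schedule and model may differ. The noisy sample is drawn in closed form as x_t = sqrt(ᾱ_t) · x_0 + sqrt(1 − ᾱ_t) · ε, and a network is trained to predict ε. Generation then runs the learned reverse process starting from pure noise, which is not shown here. The "image" is just a flat list of pixel values for simplicity.

```python
import math
import random
from typing import List, Tuple


def linear_beta_schedule(T: int = 1000, beta_start: float = 1e-4,
                         beta_end: float = 0.02) -> List[float]:
    # Linearly spaced noise variances beta_1 .. beta_T (assumed DDPM defaults).
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]


def alpha_bars(betas: List[float]) -> List[float]:
    # alpha_bar_t = prod_{s<=t} (1 - beta_s): how much of x_0 survives at step t.
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def q_sample(x0: List[float], t: int, abar: List[float],
             rng: random.Random) -> Tuple[List[float], List[float]]:
    """Draw x_t ~ q(x_t | x_0) in closed form; t is a 0-based step index.
    Returns (x_t, eps); eps is the regression target during training."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = abar[t]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps


betas = linear_beta_schedule()
abar = alpha_bars(betas)
rng = random.Random(0)
x0 = [0.5, -0.25, 1.0, 0.0]                # toy "image" scaled to [-1, 1]
x_early, _ = q_sample(x0, 10, abar, rng)   # still close to x0
x_late, _ = q_sample(x0, 999, abar, rng)   # almost pure Gaussian noise
print(round(abar[0], 4), abar[-1] < 1e-3)  # 0.9999 True
```

Because ᾱ_t shrinks towards zero, the final step is essentially noise, which is why unconditional sampling can start from a random Gaussian image.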

Check out the results below...

Results

Video prediction using a GPT-2-like architecture combined with a discrete VAE that downsamples the videos:

The model takes in an input frame and generates 4 video frames that follow the input...
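The discrete VAE's job is to shrink each frame into a small grid of codebook indices. This lets the transformer model a short token sequence instead of raw pixels. The following is a minimal sketch of that quantization step. It assumes a VQ-VAE-style nearest-neighbour codebook lookup; a Gumbel-softmax discrete VAE, as used in DALL-E, is another common choice, and which one the project used isn't stated here. The learned convolutional encoder is replaced by simple average pooling over `factor x factor` patches, and `fake_encoder`, `encode_to_tokens` and the toy codebook are all hypothetical. Frame height and width are assumed to be divisible by `factor`.

```python
from typing import List, Sequence

Vector = List[float]


def fake_encoder(frame: List[List[Vector]], factor: int) -> List[List[Vector]]:
    """Hypothetical stand-in for the learned encoder: average-pool each
    factor x factor patch of an H x W x C frame into one C-dim latent."""
    h, w, c = len(frame), len(frame[0]), len(frame[0][0])
    latents = []
    for i in range(0, h, factor):
        row = []
        for j in range(0, w, factor):
            acc = [0.0] * c
            for di in range(factor):
                for dj in range(factor):
                    for k, v in enumerate(frame[i + di][j + dj]):
                        acc[k] += v
            row.append([a / (factor * factor) for a in acc])
        latents.append(row)
    return latents


def quantize(latent: Sequence[float], codebook: Sequence[Sequence[float]]) -> int:
    # Index of the nearest codebook vector (squared Euclidean distance).
    return min(range(len(codebook)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(latent, codebook[k])))


def encode_to_tokens(frame, codebook, factor: int = 8) -> List[List[int]]:
    # Downsample, then replace every latent vector with its codebook index.
    return [[quantize(z, codebook) for z in row] for row in fake_encoder(frame, factor)]


codebook = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [1.0, 0.0, 0.0]]  # black, white, red
frame = [[[1.0, 0.0, 0.0] if x < 16 else [1.0, 1.0, 1.0] for x in range(32)]
         for _ in range(32)]  # 32 x 32 RGB frame: red left half, white right half
tokens = encode_to_tokens(frame, codebook, factor=8)
print(len(tokens), len(tokens[0]))  # 4 4
print(tokens[0])                    # [2, 2, 1, 1]
```

With these toy numbers, a 32 x 32 frame becomes 16 tokens, so one input frame plus 4 predicted frames is an 80-token sequence for the transformer. Real frames, codebooks and downsampling factors would be larger.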

Unconditional generation with diffusion models: